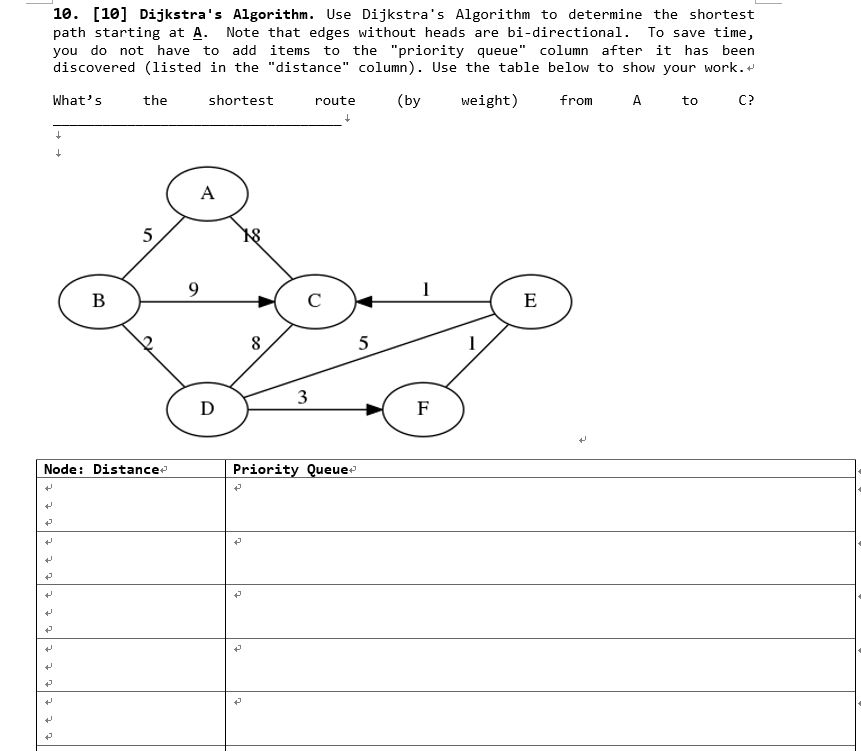

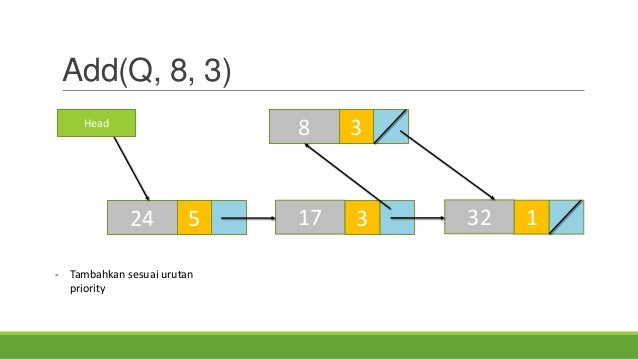

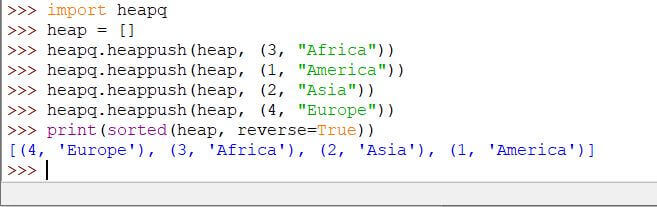

One of the many interesting things that I learned from my artificial intelligence class is that the big difference between breadth-first search, depth-first search, and Dijkstra’s algorithm is the data structure that stores the vertices.

Python implementation of the priority queue

Python implementation of Dijkstra’s algorithm

The priority queue should be its own class, but I don't know how to call a method of one class from another. I researched this and came across staticmethod, but was unsure how to use it.

I want to clarify that I believe the $O(E\log V+V)$ runtime requires a special priority queue implementation: the Fibonacci heap. I'm just adding this clarification because I couldn't understand the $O(E\log V+V)$ analysis, since I had never heard of the Fibonacci heap (I am currently taking data structures and we never mentioned it). Apparently it has some pretty crazy runtime analyses!

A standard n-ary heap would lead to an $O((E+V)\log V)$ runtime. The proof I'm used to (it would work with a Fibonacci heap too, just substitute the better runtimes for the priority queue operations) is what Jakube showed.

Every vertex is pushed into the queue when it is first discovered and popped off when it is processed. This gives $O(V \cdot \log V)$, since insert() and removeMin() are each $O(\log V)$ when, in the worst case, the queue has almost all of our vertices. Every edge is examined a constant number of times. In the worst case, one of those times will be one where we update a distance to another node, which costs an $O(\log V)$ decreaseKey(). So we get $O(E \cdot \log V)$ for all of those.

Combined you end up with $O(V \cdot \log V + E \cdot \log V)$, which becomes $\boxed{O((V+E)\log V)}$.

With the Fibonacci heap, insert() and decreaseKey() are amortized $O(1)$, while removeMin() (the pops) remains amortized $O(\log V)$. The vertex part therefore stays at $O(V \log V)$ and the edge part goes from $O(E \log V)$ to $O(E)$, for $O(E + V \log V)$ overall.

The comments in the C++ program read: "treat node 0 as the starting vertex (arbitrary)"; "disallow anti-parallel arcs, maximum number of edges is N*(N-1)/2"; "however, also need an array to mark vertices as visited"; and "find uncolored vertex with shortest distance from source". The only code that remains is the fragment: for (int i = 0 i … pred(N, -1), dist(N, INT_MAX).

On my computer, I ran this program for a random graph with 10 vertices and 25 edges. This happens to be the same random graph and solution as for the priority queue version of Dijkstra’s algorithm. The graph is the same because the random numbers used are not really random; they are pseudo-random. The solution is the same because this graph (probably, I have not checked to be sure) does not have multiple shortest path solutions. In general, it is certainly possible for a graph to have several paths of equal length between two vertices.

What is the difference, besides implementation details? The priority queue version can handle negative weight edges (but not negative weight cycles). With each relaxation, a vertex is pushed into the priority queue, so it can revisit a vertex after finding a subsequent shorter path. This version without a priority queue cannot handle negative weight edges. After a vertex is coloured, it is never visited again.
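The remark above that breadth-first search, depth-first search, and Dijkstra’s algorithm differ mainly in the data structure that stores the vertices can be sketched in Python. This is only an illustration under my own assumptions: the class names Queue, Stack and MinHeap, the function visit_order, and the small example graph are all hypothetical and do not come from the original programs.

```python
import heapq
from collections import deque


class Queue:
    """First in, first out: gives breadth-first search."""
    def __init__(self):
        self._items = deque()
    def push(self, item):
        self._items.append(item)
    def pop(self):
        return self._items.popleft()
    def __len__(self):
        return len(self._items)


class Stack:
    """Last in, first out: gives depth-first search."""
    def __init__(self):
        self._items = []
    def push(self, item):
        self._items.append(item)
    def pop(self):
        return self._items.pop()
    def __len__(self):
        return len(self._items)


class MinHeap:
    """Smallest path cost first: gives Dijkstra's algorithm."""
    def __init__(self):
        self._items = []
    def push(self, item):
        heapq.heappush(self._items, item)
    def pop(self):
        return heapq.heappop(self._items)
    def __len__(self):
        return len(self._items)


def visit_order(graph, start, frontier):
    """Return the order in which vertices are processed."""
    frontier.push((0, start))          # items are (path cost so far, vertex)
    done = set()
    order = []
    while frontier:
        cost, u = frontier.pop()
        if u in done:
            continue                   # already processed via another entry
        done.add(u)
        order.append(u)
        for v, weight in graph[u]:
            if v not in done:
                frontier.push((cost + weight, v))
    return order


# Hypothetical example graph: vertex -> list of (neighbour, edge weight)
graph = {
    "A": [("B", 4), ("C", 1)],
    "B": [("D", 1)],
    "C": [("B", 1), ("D", 5)],
    "D": [],
}

print(visit_order(graph, "A", Queue()))    # ['A', 'B', 'C', 'D']
print(visit_order(graph, "A", Stack()))    # ['A', 'C', 'D', 'B']
print(visit_order(graph, "A", MinHeap()))  # ['A', 'C', 'B', 'D']
```

The search loop is identical in all three calls; only the object that decides which pending vertex comes out next changes.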

In the priority queue version, there is no colouring of vertices. The main loop continues so long as relaxations continue to be found.
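A minimal Python sketch of the priority queue version described above might look like the following. It assumes Python's heapq module as the priority queue and a dictionary of adjacency lists as the graph. The function name dijkstra_pq and the check that skips stale queue entries are my own additions, not taken from the original program.

```python
import heapq


def dijkstra_pq(graph, source):
    """Shortest distances from source; graph maps vertex -> [(neighbour, weight)]."""
    dist = {v: float("inf") for v in graph}
    pred = {v: None for v in graph}
    dist[source] = 0
    queue = [(0, source)]

    # No colouring: the loop runs for as long as relaxations keep
    # adding entries to the queue.
    while queue:
        d, u = heapq.heappop(queue)
        if d > dist[u]:
            continue  # stale entry: a shorter path to u was found later (assumed optimisation)
        for v, weight in graph[u]:
            if d + weight < dist[v]:
                # Relaxation: record the shorter path and push the vertex,
                # even if it has been processed before.
                dist[v] = d + weight
                pred[v] = u
                heapq.heappush(queue, (dist[v], v))
    return dist, pred


# Hypothetical graph with one negative edge (2 -> 1) and no negative cycle.
graph = {
    0: [(1, 2), (2, 5)],
    1: [(3, 1)],
    2: [(1, -4)],
    3: [],
}
dist, pred = dijkstra_pq(graph, 0)
print(dist)  # {0: 0, 1: 1, 2: 5, 3: 2}
```

In this example vertex 1 is first processed with distance 2, then pushed again with distance 1 once the edge from vertex 2 is relaxed, which is the revisiting behaviour that lets this variant cope with a negative edge.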

The typical presentation of Dijkstra’s single source shortest path algorithm uses a priority queue. In the version without one, the main relaxation loop colours the graph vertices as it goes and terminates when the entire graph has been coloured.
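The colouring loop described above can be sketched in Python as follows. This is not the original C++ program, only an approximation: it borrows the names pred and dist and the idea of a coloured array from the surviving fragments, while the function name dijkstra_coloured, the list-of-lists graph format, and the example graphs are assumptions of mine.

```python
def dijkstra_coloured(graph, source=0):
    """Dijkstra without a priority queue; graph[u] is a list of (v, weight)."""
    n = len(graph)
    INF = float("inf")
    dist = [INF] * n
    pred = [-1] * n
    coloured = [False] * n   # marks vertices whose distance is final
    dist[source] = 0

    for _ in range(n):
        # Find the uncoloured vertex with the shortest distance from the source.
        u = -1
        for v in range(n):
            if not coloured[v] and (u == -1 or dist[v] < dist[u]):
                u = v
        if dist[u] == INF:
            break            # remaining vertices are unreachable
        coloured[u] = True   # once coloured, u is never visited again

        # Relax the edges leaving u.
        for v, weight in graph[u]:
            if not coloured[v] and dist[u] + weight < dist[v]:
                dist[v] = dist[u] + weight
                pred[v] = u
    return dist, pred


# Hypothetical graph with non-negative weights; node 0 is the starting vertex.
graph = [
    [(1, 7), (2, 3)],
    [(3, 1)],
    [(1, 2), (3, 8)],
    [],
]
print(dijkstra_coloured(graph))  # ([0, 5, 3, 6], [-1, 2, 0, 1])

# With a negative edge the colouring goes wrong: vertex 1 is coloured at
# distance 2 before the cheaper path 0 -> 2 -> 1 (cost 1) is discovered.
negative = [
    [(1, 2), (2, 5)],
    [(3, 1)],
    [(1, -4)],
    [],
]
print(dijkstra_coloured(negative)[0])  # [0, 2, 5, 3]
```

Scanning for the minimum takes $O(V)$ per iteration, so this sketch runs in $O(V^2)$ time overall.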
